February 13, 2024

When a human-AI conversation involves many rounds of continuous dialogue, the powerful large language machine-learning models that drive chatbots like ChatGPT sometimes start to collapse, causing the bots’ performance to rapidly deteriorate.

A team of researchers from MIT and elsewhere has pinpointed a surprising cause of this problem and developed a simple solution that enables a chatbot to maintain a nonstop conversation without crashing or slowing down.

Their method involves a tweak to the key-value cache (which is like a conversation memory) at the core of many large language models. In some methods, when this cache needs to hold more information than it has capacity for, the first pieces of data are bumped out. This can cause the model to fail.

By ensuring that these first few data points remain in memory, the researchers’ method allows a chatbot to keep chatting no matter how long the conversation goes.
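
The idea can be illustrated with a toy sketch. This is not the researchers' implementation: the class name `SinkCache`, the parameters `capacity` and `num_kept`, and the use of plain integers in place of real key-value entries are all assumptions made for illustration. It only shows the eviction policy described above, in which the earliest entries are pinned in memory while later ones roll through a fixed-size window.

```python
from collections import deque


class SinkCache:
    """Toy cache: pins the first `num_kept` entries, slides a window over the rest.

    Hypothetical illustration only; real key-value caches hold tensors
    per model layer, not plain Python objects.
    """

    def __init__(self, capacity, num_kept):
        if not 0 <= num_kept < capacity:
            raise ValueError("num_kept must be in [0, capacity)")
        self.num_kept = num_kept
        self.kept = []  # earliest entries, never evicted
        # A deque with maxlen silently drops its oldest item when full.
        self.recent = deque(maxlen=capacity - num_kept)

    def append(self, entry):
        if len(self.kept) < self.num_kept:
            self.kept.append(entry)
        else:
            self.recent.append(entry)

    def contents(self):
        return self.kept + list(self.recent)


# Contrast with a plain first-in, first-out cache of the same size.
fifo = deque(maxlen=6)
pinned = SinkCache(capacity=6, num_kept=2)
for token in range(10):
    fifo.append(token)
    pinned.append(token)

print(list(fifo))         # [4, 5, 6, 7, 8, 9]: the first entries are gone
print(pinned.contents())  # [0, 1, 6, 7, 8, 9]: the first entries survive
```

Both caches stay at six entries however long the loop runs, which is why memory use does not grow with conversation length. The only difference is which entries are discarded.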

The complete article is available from MIT News.

Explore

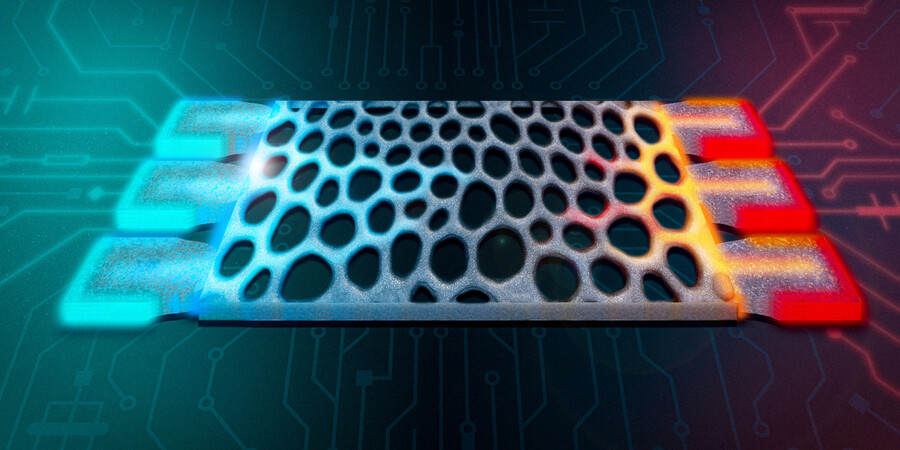

New Photonic Device Efficiently Beams Light into Free Space

Adam Zewe | MIT News

Light-emitting structures that curl off the chip surface could enable advanced displays, high-speed optical communications, and larger-scale quantum computers.

New Method Could Increase LLM Training Efficiency

Adam Zewe | MIT News

By leveraging idle computing time, researchers can double the speed of model training while preserving accuracy.

MIT Engineers Design Structures that Compute with Heat

Adam Zewe | MIT News

By leveraging excess heat instead of electricity, microscopic silicon structures could enable more energy-efficient thermal sensing and signal processing.